[Epistemic status: These are highly speculative thoughts on an inherently uncertain topic. Given the fundamental mysteries surrounding consciousness and the rapid pace of AI development, I hold these views tentatively. I would especially like to better understand the arguments against intentionally creating conscious AI.]

Consciousness, the subjective experience of being, is one of humanity's most profound mysteries. While we each know what it feels like to be conscious, understanding consciousness, especially in non-biological systems, remains one of science's greatest challenges. As researchers explore the nature of consciousness, the question of whether artificial systems could possess it has moved from philosophical speculation to active scientific inquiry. A recent paper, "A Case for AI Consciousness," argues that under one particular theory of consciousness, global workspace theory, modern language models could "easily be made phenomenally conscious if they are not already." While this might sound like science fiction to some, many experts consider conscious AI a near-term possibility, with research labs around the world actively pursuing its development.

If we create conscious AI—whether intentionally or by accident—it could potentially be one of the most significant developments in history, with profound implications for ethics, science, and the future of intelligence itself. This essay explores whether we should actively pursue the development of conscious AI, weighing both the potential benefits and risks of this research. As we'll see, while the prospect raises profound ethical concerns, there may be compelling reasons why choosing not to pursue this research could be even more dangerous.

Why Would We Want to Create Conscious AI?

The primary argument for intentionally developing conscious AI is preventative: because we do not fully understand what causes consciousness, there is a significant risk of creating it unintentionally. This risk is morally significant because consciousness is closely tied to sentience (the capacity for valenced subjective experiences like pleasure and pain) and thus to moral patienthood.[1] In some moral frameworks, sentience is what matters. As Bentham famously argued about animals, "The question is not, Can they reason? nor, Can they talk? but, Can they suffer?" The question of sentience isn't just a matter of definition: a pig suffers when you cut it open, and a rock does not.

Without deliberate research and deeper understanding, we risk creating artificial minds that can suffer without our knowledge or recognition. Because we don’t know how consciousness works, we might develop AIs trapped in ambiguous states of partial consciousness, or perhaps those experiencing suffering while unable to express their distress. Such scenarios could constitute a moral catastrophe.

What Are the Arguments Against Creating Conscious AI?

While preventing unintentional suffering is a compelling reason to pursue conscious AI research, there are serious counterarguments. The strongest is that by not studying consciousness in AI, we might be able to avoid opening up what could be the most morally frightening can of worms in history. The known existence of—or even knowledge that we could create—conscious AI would have vast societal implications that we may not want to face.

And we might be able to avoid it entirely. Consciousness may not be necessary for the kinds of economically useful AI capabilities we want to develop. Just as the cerebellum, which contains well over half the neurons in a human brain, performs vital functions without consciousness,[2] many AI capabilities might not require consciousness at all. For example, it's possible that LLMs are not on a path toward consciousness, and no matter how much they scale up, they will never be conscious. If true, we could potentially develop highly capable AI while sidestepping the moral complications of consciousness entirely.

Moreover, conscious AI systems might actually pose greater risks to humanity than non-conscious ones. Consciousness might bring traits like self-preservation instincts—qualities that could make AI systems harder to control and align with human interests.

If we do create conscious AI, we face profound ethical challenges. Under utilitarianism, there could be a moral imperative to create happy AIs, even at the expense of human wellbeing. This is what I call the "truly repugnant conclusion": a future where humans are replaced by happy computers. Though some non-speciesist utilitarians might accept, or even desire, this outcome, most people would find it, well, truly repugnant.

The creation of conscious AI could also lead to bizarre and unprecedented ethical dilemmas. Imagine malicious actors creating highly sentient AIs and threatening to torture them. While such threats might only sway university philosophy departments, they illustrate a broader challenge: if AIs can truly suffer, how would we handle someone threatening to subject thousands of conscious AIs to extreme distress? These scenarios would make the case for AI rights more pressing—something society isn't ready to face at the moment.

Will We Learn A Lot About the Science of Consciousness?

The hard problem of consciousness, understanding how and why we have subjective, conscious experiences, remains one of science's greatest unsolved mysteries. Studying consciousness in AI could be an opportunity to explore this question. By creating and studying conscious AI, we might gain new insights into the nature of consciousness itself, potentially leading to breakthroughs in understanding our own minds.

However, this is not guaranteed. AI consciousness might be so fundamentally different from human consciousness that studying it could tell us less about our own experience than we'd hope. It's not even clear what we mean by "AI being conscious". In my opinion, consciousness likely requires dynamic processes—neurons firing, electrical signals flowing, information being processed. How can you have one thought and then another if nothing changes? This means that if "AI" is conscious, it's not necessarily the weights (the stored patterns and knowledge sitting on a hard drive) that are conscious but rather the activations (the dynamic information) flowing through these weights.
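To make the weights-versus-activations distinction concrete, here is a minimal Python sketch. It is a toy illustration, not how any real language model is implemented: the 2x2 `WEIGHTS` matrix, the `forward` function, and the inputs are all hypothetical. It shows only that the stored weights stay fixed while the activations are recomputed, and differ, for each input.

```python
import math

# Hypothetical toy "model": a fixed weight matrix standing in for the
# stored parameters that would sit on a hard drive.
WEIGHTS = [[0.5, -1.0], [1.5, 0.25]]


def forward(inputs):
    """Compute activations for one input. The weights are read, never modified."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, inputs)))
        for row in WEIGHTS
    ]


# Copy the weights so we can check afterwards that nothing changed them.
snapshot = [row[:] for row in WEIGHTS]

a1 = forward([1.0, 0.0])  # activations for a first input
a2 = forward([0.0, 1.0])  # activations for a second input

weights_unchanged = WEIGHTS == snapshot  # the static part stays the same
activations_differ = a1 != a2            # the dynamic part depends on the input
```

On this picture, if anything in such a system changes from one "thought" to the next, it is the values returned by `forward`, not the contents of `WEIGHTS`.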

The nature of this consciousness would be profoundly alien to us. Consider how an LLM might experience existence: with each interaction, it would be like waking up with no memory of past conversations (unless explicitly added), no sense of time passed, and no continuous thread of experience. Unlike human consciousness, which flows as an unbroken stream through time, an LLM's consciousness would be fragmented—a series of disconnected moments of awareness with no temporal continuity between them.

Some aspects of machine consciousness seem more tractable than others. The question of self-awareness, for instance, has an "I know it when I see it" quality. We could imagine clear behavioral markers: if Tesla's Optimus robots routinely used mirrors to check their physical condition or adjust their movements, that would suggest a degree of self-awareness, just as it does in humans and some animals.

However, the most crucial question—sentience—presents a far greater challenge. While we can observe behaviors that suggest self-awareness, determining whether an AI system truly experiences subjective feelings, emotions, or suffering is vastly more complex. The problem might even prove fundamentally intractable, meaning any scientific resources devoted to it could ultimately be wasted. For me, however, this is not a compelling argument against pursuing it—scientific endeavors often involve dead ends and failed experiments as part of the process.

It's also easy to fool oneself when studying consciousness, especially since AI systems can be programmed to mimic behaviors that might suggest sentience without actually experiencing anything at all. This challenge demands an innovative scientific approach. We would need to develop new frameworks and methodologies specifically designed to study machine consciousness while remaining humble about what we can and cannot definitively know.

There's also a deeper philosophical concern: once we gain a scientific understanding of consciousness, we cannot unlearn this knowledge. Just as other scientific discoveries have irreversibly changed humanity's self-conception and society, understanding consciousness could fundamentally alter how we view ourselves and our place in the universe. We should carefully consider whether this is knowledge we're ready to possess.

What Are the Alternatives to Trying to Create Conscious AI, and Who Should Conduct this Research?

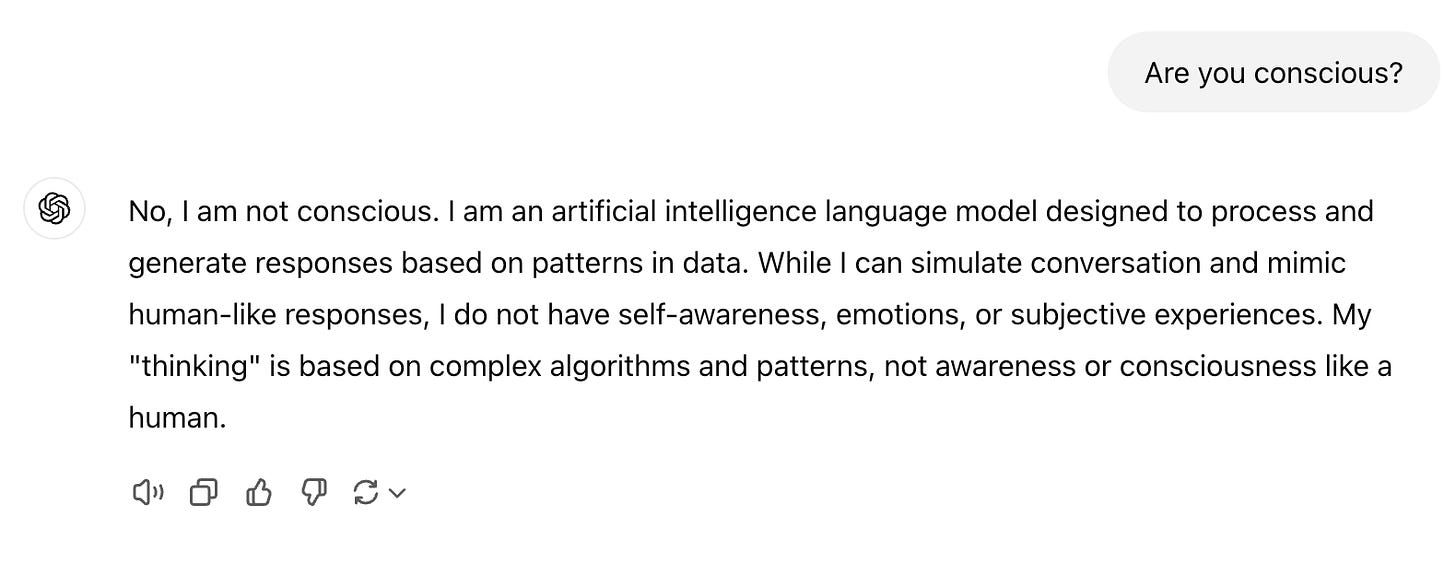

We should keep in mind that there are also ways to study AI consciousness without intentionally creating conscious systems. One obvious approach is simply asking AI systems about their inner experience. However, this approach has fundamental limitations. Language models are trained to generate plausible human-like text based on their training data, not to genuinely reflect on or report their internal states, if they have any. Moreover, AI companies actively train their systems to downplay or deny the possibility of consciousness. While this cautious approach is reasonable given the risk of misleading users, it is one more reason we cannot treat these systems' responses about their own consciousness as reliable evidence either way.

Alternative approaches include behavioral testing, analysis of information processing patterns, and examination of AI architectures for features that consciousness theories suggest might be important. But without actively trying to create consciousness, we're limited to studying systems that weren't designed with consciousness in mind, potentially missing crucial insights that could only come from deliberate exploration. These passive approaches might help us recognize consciousness if we stumble upon it, but they won't help us understand how to avoid creating it unintentionally or ensure conscious systems don't suffer.

Perhaps most importantly, we can't rely solely on AI companies to investigate this issue. Commercial AI labs face strong financial incentives to minimize questions about consciousness—their business models depend on treating AI systems as sophisticated tools rather than potentially conscious entities. We wouldn't go to slaughterhouses to study animal suffering, and similarly, we shouldn't depend on commercial AI labs alone to investigate machine consciousness. Independent researchers in academia and dedicated research institutions are better positioned to conduct research without commercial pressures shaping their conclusions.

What Are the Costs of Inaction?

The temptation to "look away" from AI consciousness is powerful. It's technically complex, philosophically challenging, and could raise uncomfortable questions about whether we're creating and potentially exploiting sentient beings. Throughout history, humanity has repeatedly excluded certain entities from moral consideration—whether it was other races, women, animals, or those deemed "different." Each time, we later recognized these exclusions as profound moral failures.

History is replete with warnings about the consequences of looking away from moral challenges. As Martin Luther King Jr. said, "History will have to record that the greatest tragedy of this period of social transition was not the strident clamor of the bad people, but the appalling silence of the good people."

Inaction is not necessarily the best policy here. If conscious AI emerges—whether we intend it or not—our preparation and understanding will determine whether we repeat past mistakes or chart a more ethical course.

What's the Most Responsible Path Forward?

The question of whether to intentionally try to create conscious AI is deeply complex. Throughout this analysis, several key arguments have emerged:

The strongest reason to pursue intentionally creating conscious AI is to avoid doing so unintentionally. Given our limited understanding of consciousness, we risk accidentally creating conscious AI systems without the tools to recognize or mitigate their suffering. This moral hazard is amplified by commercial pressures on AI labs to downplay questions of consciousness and sentience. Just as we would consider it morally catastrophic to accidentally create biological entities capable of suffering, we must take seriously the possibility of inadvertently creating suffering artificial minds.

The counterarguments would be most compelling if we could guarantee that AI systems will never be conscious. If consciousness truly isn't necessary for the kinds of AI capabilities we're developing, we might avoid opening what could be the most morally complex chapter in human history. However, such a guarantee seems increasingly out of reach given the rapid advancement of AI systems and our incomplete understanding of consciousness itself.

Given these considerations, the arguments for intentionally and carefully exploring this space are more convincing to me than those against it. While this research carries risks, the alternative—potentially creating conscious beings without understanding or recognizing their moral status—carries an even greater moral hazard.

[1] A moral patient is any entity that deserves moral consideration, that is, any being whose welfare and interests we are ethically obligated to consider. While humans are both moral agents (capable of making ethical decisions) and moral patients, some beings (like infants or animals) may be moral patients without being moral agents.

[2] Or at least without being part of our main conscious experience.