We are on an unrelenting march towards a great unknown: a world with artificial general intelligence (AGI). The most evident recent steps in this direction have been the large language models (LLMs), such as OpenAI’s GPT-3 and Google’s PaLM. The progress has been significant and surprising. That it has been surprising is, perhaps, unsurprising, given that the theoretical foundation for these models lags so far behind the empirical results. Indeed, we have no theoretical basis for understanding these models, and therefore no way of making reliable a priori projections of their performance. We have no equations that tell us how things are going to work. Thus, every nonlinear jump in performance or sudden “grokking” of a task is a surprise. How do we know what a new LLM will do? We build it, turn it on, and (figuratively) see what happens.

Sometimes performance gains are minor, sometimes they are significant. Let’s take logical sequencing as an example. Logical sequencing is the task of determining which of several lists of events is in a logical order. Here’s an example from BIG-bench, a set of benchmarks maintained by Google to test the capabilities of LLMs:

Question: Which of the following lists is correctly ordered chronologically?

(a) drink water, feel thirsty, seal water bottle, open water bottle

(b) feel thirsty, open water bottle, drink water, seal water bottle

(c) seal water bottle, open water bottle, drink water, feel thirsty

Answer: (b) feel thirsty, open water bottle, drink water, seal water bottle

Humans are pretty good at this task. This kind of reasoning comes naturally to us. But these common-sense tasks have historically been difficult for AIs, and previous generations of LLMs have struggled with them.

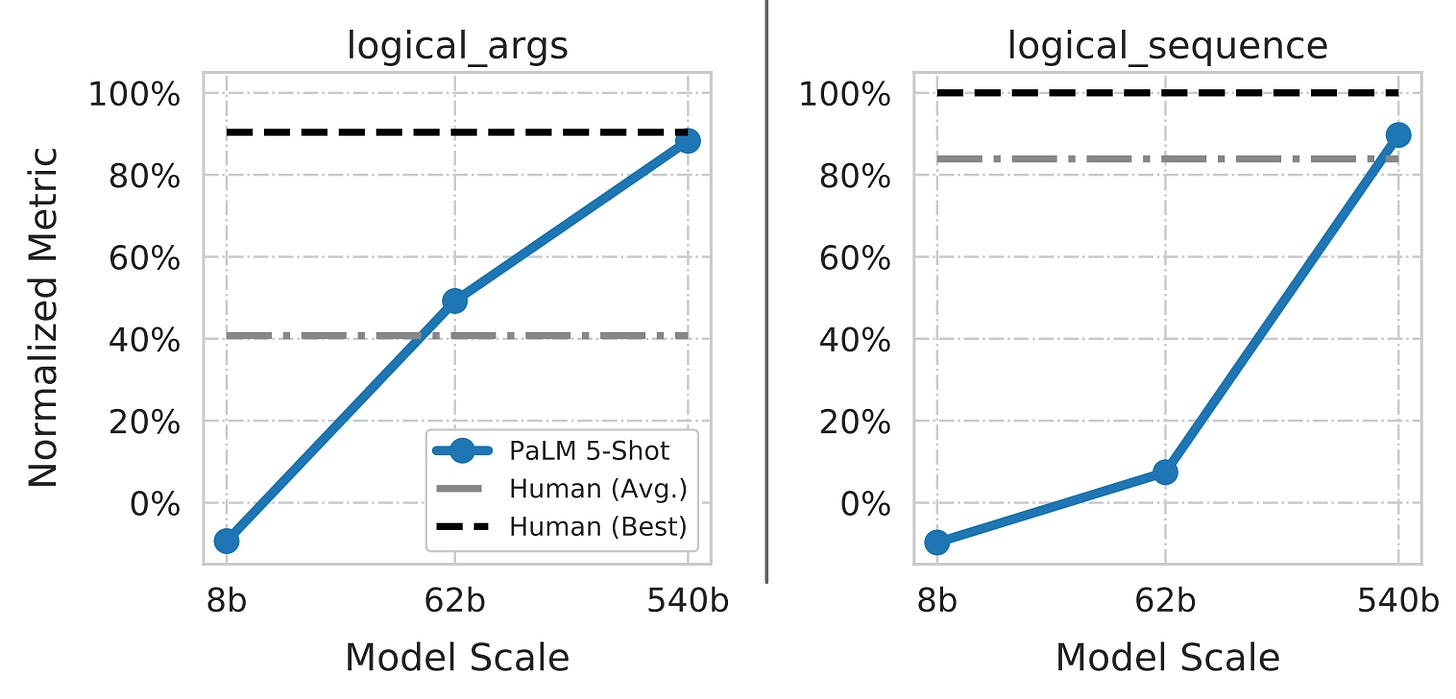

When considering PaLM’s results, it’s important to know that, like many architectures, PaLM isn’t a single model. There are three PaLM models of different sizes: PaLM 8b with 8 billion parameters, PaLM 62b with 62 billion parameters, and PaLM 540b with 540 billion parameters.

PaLM 8b is terrible at logical sequencing. In fact, it’s worse than random guessing. I have no idea why on Earth it’s worse than guessing and, as far as I know, no one else does either. PaLM 62b is better than guessing, but not by much. In truth, it’s still pretty crappy. However, when PaLM is scaled up to PaLM 540b, this architecture becomes really good. Like, better-than-your-average-human good.
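To make "worse than random guessing" concrete, here is a minimal sketch of the chance baseline for a multiple-choice task. It assumes three options per question, as in the example above; the function names are hypothetical, and this is not BIG-bench's actual scoring code.

```python
import random


def chance_accuracy(num_options: int) -> float:
    """Expected accuracy of picking one option uniformly at random."""
    return 1.0 / num_options


def simulate_random_guessing(num_questions: int, num_options: int, seed: int = 0) -> float:
    """Estimate a random guesser's accuracy by simulation."""
    rng = random.Random(seed)
    correct = 0
    for _ in range(num_questions):
        answer = rng.randrange(num_options)  # position of the correct option
        guess = rng.randrange(num_options)   # the guesser's pick
        if guess == answer:
            correct += 1
    return correct / num_questions


if __name__ == "__main__":
    # Assumption: three options per question, as in the example question.
    print(f"expected:  {chance_accuracy(3):.3f}")
    print(f"simulated: {simulate_random_guessing(20_000, 3):.3f}")
```

Under that assumption, a model that scores below roughly one in three is doing worse than a coin-flipper with a three-sided coin, which is what makes the PaLM 8b result so odd.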

Why did this last scaling cause such a big performance gain? We have no idea. It wasn’t until these results were published that we knew an LLM could perform so well on this task. Now we know there’s a jump in performance for this specific architecture somewhere between 62 billion and 540 billion parameters. This raises the question: what would happen if we made an even bigger one? Presumably, it would be even better, but how much better? We don’t know.

It’s hard to say where LLMs will go from here, especially as they relate to AGI. Are they a step on the path towards AGI? Many steps? Are the paths nearly the same? Or does the LLM path diverge and start leading away from AGI? I’ve argued before that we will need fundamental breakthroughs to achieve true AGI and I still think that’s right. But can a non-AGI LLM still do 20% of the tasks we want AGI for? 50%? 100%?

No matter the relationship between LLMs and AGI, I think we can learn a lot about AGI from what we’ve seen so far. The main lesson might be that as we approach making AGI, it’s almost assured that we won’t know its true capabilities beforehand. In fact, we probably won’t know we’ve built a true AGI until after we’ve built it (and are likely to fool ourselves many times in the interim).

True AGI, if we can build it, will have a transformative impact on society. Not only that, but the transformation is likely to happen so quickly that it will test humanity’s limit for how much change we can tolerate in a short time. The Agricultural Revolution took millennia. The Industrial Revolution, centuries. The Information Revolution, only decades. What if the AI Revolution takes merely a few years?

If we were on the brink of a seismic shift, would we know? I think people assume that we would, but I’m not so sure. Once we’ve grown accustomed to things being a certain way, it’s hard to imagine a radical change, even if we know the world has radically changed many times before. What does it look like to stand on an exponential curve? If you did and looked to either side, it would probably look linear. It’s not until it’s over that you can step back and exclaim about how obviously exponential the entire period was. I’m not confident that if it were close I would realize it.
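The claim that an exponential looks linear from up close can be checked with a small sketch. It compares exp(t) against its tangent line over a narrow and a wide window; the function name and the window sizes are my own illustrative choices.

```python
import math


def max_relative_gap(center: float, half_width: float, samples: int = 101) -> float:
    """Largest relative gap between exp(t) and its tangent line at `center`,
    measured over the window [center - half_width, center + half_width]."""
    worst = 0.0
    for i in range(samples):
        t = center - half_width + 2.0 * half_width * i / (samples - 1)
        exact = math.exp(t)
        tangent = math.exp(center) * (1.0 + (t - center))  # straight-line approximation
        worst = max(worst, abs(exact - tangent) / exact)
    return worst


if __name__ == "__main__":
    print(f"narrow window: {max_relative_gap(5.0, 0.1):.4f}")
    print(f"wide window:   {max_relative_gap(5.0, 2.0):.4f}")
```

Over the narrow window the straight line is off by well under one percent; over the wide window it is off by several hundred percent. Looking to either side only tells you about the narrow window.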

It’s hard to look at PaLM and know what will happen next. Lines extend in many directions, and where these lines end is hard to say. How far they go and what other lines they intersect with are unclear. It seems almost certain that some of them will converge onto something big. There’s no telling how close we are. The only thing we know is that with each day we are that much closer to whatever happens next.